Testing Confirms 10.2x Faster Response Times, Exceeding Cloud-Hosted Alternatives

SAN JOSE, Calif.--(BUSINESS WIRE)--March 17, 2026--

NVIDIA GTC 2026 — Langsmart, the enterprise AI governance company, today announced the successful completion of a rigorous enterprise evaluation with a Fortune 200 financial institution. The testing confirms that Langsmart’s Smartflow platform delivers a 10.2x speedup in response times, achieving sub-300ms latency on standard, low-resource hardware.

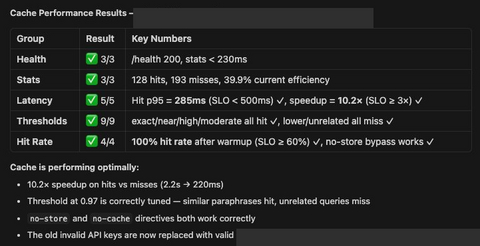

Smartflow semantic cache performance results from enterprise testing with a Fortune 200 financial institution. Testing was conducted on a 4 vCPU, 8 GB on-premises server, with Smartflow deployed as a Docker container.

As enterprise adoption of AI gateways accelerates – with analysts projecting 70% of engineering teams will use them by 2028 – the industry has struggled with a lack of standardized performance data. Langsmart’s latest results provide a transparent blueprint for organizations requiring high-performance AI governance within strict on-premises and air-gapped environments.

Enterprise Evaluation: Performance Under Pressure

The evaluation focused on real-world financial services workloads, prioritizing reliability and speed within a secure infrastructure. Unlike cloud-based gateways that require data to leave the perimeter, Smartflow was deployed as a Docker container on a modest 4 vCPU, 8 GB on-premises server.

Key performance milestones included:

- 10.2x Response Speedup – Response time fell from 2.2 seconds to 220 milliseconds when requests were served from the semantic cache.

- Sub-300ms p95 Latency – The system maintained a 285ms p95 latency on semantic cache hits, comfortably meeting the sub-500ms Service Level Objectives (SLOs) required by global financial institutions.

- High-Accuracy Cache Hits – Achieved a 40–50% hit rate at a 0.95 similarity threshold, with 100% of tested workloads showing measurable improvement.

- Rigorous Reliability – Smartflow passed 24 out of 24 automated health, latency, and threshold checks.
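For readers unfamiliar with how a similarity threshold governs cache hits, the following is a minimal illustrative sketch, not Smartflow's implementation. It assumes prompts have already been converted to embedding vectors by some model; the `SemanticCache` class and its method names are hypothetical, and only the 0.95 threshold comes from the results above.

```python
import math

# Threshold quoted in the evaluation results; everything else here is illustrative.
SIMILARITY_THRESHOLD = 0.95


def cosine_similarity(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


class SemanticCache:
    """Hypothetical in-memory cache keyed by prompt embeddings."""

    def __init__(self, threshold=SIMILARITY_THRESHOLD):
        self.threshold = threshold
        self.entries = []  # list of (embedding, response) pairs

    def put(self, embedding, response):
        self.entries.append((list(embedding), response))

    def get(self, embedding):
        """Return the closest cached response, or None if below the threshold."""
        best_score, best_response = 0.0, None
        for cached_embedding, response in self.entries:
            score = cosine_similarity(embedding, cached_embedding)
            if score > best_score:
                best_score, best_response = score, response
        if best_score >= self.threshold:
            return best_response  # cache hit: the model call can be skipped
        return None  # cache miss: forward the prompt to the model


cache = SemanticCache()
cache.put([1.0, 0.0], "cached answer")
print(cache.get([0.99, 0.05]))  # near-duplicate prompt, served from cache
print(cache.get([0.7, 0.7]))    # dissimilar prompt, falls through to the model
```

A higher threshold trades hit rate for precision: fewer prompts match, but those that do are closer in meaning to the cached original. The linear scan here is for clarity only; a production system would typically use a vector index.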

“For banking, insurance, and healthcare, routing prompts and model responses through a third-party cloud is a liability,” said Craig Alberino, Founder and CEO of Langsmart. “Smartflow eliminates that risk by deploying entirely within the client’s network, delivering performance that actually exceeds cloud-hosted alternatives.”

Raising the Industry Standard: "Show Me the p95"

While the evaluation highlights Smartflow’s technical achievements, it also exposes a critical transparency gap in the AI gateway market. Langsmart’s research found that while many vendors promise efficiency, none currently publish p95 or p99 latency data – the metrics most critical for production-grade enterprise stability.

“Enterprise buyers deserve to see real numbers on real hardware, not marketing claims,” said Alberino. “We are calling on all AI gateway vendors to follow our lead and publish standardized benchmarks. If you’re providing enterprise infrastructure, show me the p95.”

Langsmart’s push for transparency aims to provide CISOs and CTOs with the empirical data needed to evaluate AI governance tools effectively, ensuring that security does not come at the cost of performance.
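As a point of reference for the p95 and p99 metrics discussed above, the sketch below shows one common way to derive them from raw latency samples, using the nearest-rank method. It is an assumption-laden illustration rather than Langsmart's published methodology: the `percentile` helper is hypothetical and the sample values are synthetic.

```python
import math


def percentile(samples, pct):
    """Nearest-rank percentile: the smallest sample that is greater than
    or equal to pct percent of all samples."""
    if not samples:
        raise ValueError("samples must not be empty")
    if not 0 < pct <= 100:
        raise ValueError("pct must be in the range (0, 100]")
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)  # 1-based rank
    return ordered[rank - 1]


# Synthetic latencies in milliseconds, for illustration only.
latencies_ms = [180, 195, 205, 210, 220, 225, 240, 260, 285, 410]

print("p50:", percentile(latencies_ms, 50))
print("p95:", percentile(latencies_ms, 95))
print("p99:", percentile(latencies_ms, 99))
```

Tail percentiles matter because an average can hide slow outliers: in the synthetic data above, a single 410 ms request dominates the p95 and p99 values while barely moving the median.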

For the full benchmarking methodology and results, visit langsmart.ai/blog/show-me-the-p95.

About Langsmart

Langsmart is the enterprise AI governance company building Smartflow – the on-premises AI firewall, gateway, and governance control plane for regulated industries. Smartflow enables financial services, healthcare, and insurance organizations to govern AI model traffic at the network layer without data leaving their infrastructure. Langsmart is headquartered in the New York City metro area with teams in Connecticut and Austin, TX.

View source version on businesswire.com: https://www.businesswire.com/news/home/20260317721580/en/

Media Contact

Craig Alberino, Founder & CEO

Langsmart

press@langsmart.ai

www.langsmart.ai