Machine learning algorithms have gained fame for being able to ferret out relevant information from datasets with many features, such as tables with dozens of columns and images with millions of pixels. Thanks to advances in cloud computing, you can often run very large machine learning models without noticing how much computational power works behind the scenes.

But every new feature that you add to your problem adds to its complexity, making it harder to solve it with machine learning algorithms. Data scientists use dimensionality reduction, a set of techniques that remove excessive and irrelevant features from their machine learning models.

Dimensionality reduction slashes the costs of machine learning and sometimes makes it possible to solve complicated problems with simpler models.

The curse of dimensionality

Machine learning models map features to outcomes. For instance, say you want to create a model that predicts the amount of rainfall in one month. You have a dataset of different information collected from different cities in separate months. The data points include temperature, humidity, city population, traffic, number of concerts held in the city, wind speed, wind direction, air pressure, number of bus tickets purchased, and the amount of rainfall. Obviously, not all this information is relevant to rainfall prediction.

Some of the features might have nothing to do with the target variable. Evidently, population and number of bus tickets purchased do not affect rainfall. Other features might be correlated to the target variable, but not have a causal relation to it. For instance, the number of outdoor concerts might be correlated to the volume of rainfall, but it is not a good predictor for rain. In other cases, such as carbon emission, there might be a link between the feature and the target variable, but the effect will be negligible.

In this example, it is evident which features are valuable and which are useless. In other problems, the excess features might not be obvious, and finding them requires further data analysis.

But why bother to remove the extra dimensions? When you have too many features, you’ll also need a more complex model. A more complex model means you’ll need a lot more training data and more compute power to train your model to an acceptable level.

And since machine learning has no understanding of causality, models try to map any feature included in their dataset to the target variable, even if there’s no causal relation. This can lead to models that are imprecise and erroneous.

On the other hand, reducing the number of features can make your machine learning model simpler, more efficient, and less data-hungry.
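To make this concrete, here is a minimal sketch on synthetic data. Everything in it is an assumption made for illustration: one feature truly drives the target, 40 other features are pure noise, and the helper `make_data` is hypothetical. With only 50 training examples, a linear model that sees all 41 features fits the training set well but generalizes worse than a model that sees only the relevant feature.

```python
import numpy as np
from sklearn.linear_model import LinearRegression

rng = np.random.default_rng(0)


def make_data(n_samples, n_noise_features=40):
    """Hypothetical data: column 0 drives the target, the rest is noise."""
    relevant = rng.normal(size=(n_samples, 1))
    noise_features = rng.normal(size=(n_samples, n_noise_features))
    y = 3.0 * relevant[:, 0] + rng.normal(size=n_samples)
    return np.hstack([relevant, noise_features]), y


X_train, y_train = make_data(50)     # small training set
X_test, y_test = make_data(1000)     # larger held-out set

# Model that sees all 41 features vs. model that sees only the relevant one.
full = LinearRegression().fit(X_train, y_train)
small = LinearRegression().fit(X_train[:, :1], y_train)

full_train_r2 = full.score(X_train, y_train)
full_test_r2 = full.score(X_test, y_test)
small_test_r2 = small.score(X_test[:, :1], y_test)

print(f"all features:  train R^2 = {full_train_r2:.2f}, test R^2 = {full_test_r2:.2f}")
print(f"one feature:   test R^2 = {small_test_r2:.2f}")
```

The gap between the training and test scores of the larger model is the cost of letting it map noise features to the target.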

The problems caused by too many features are often referred to as the “curse of dimensionality,” and they’re not limited to tabular data. Consider a machine learning model that classifies images. If your dataset is composed of 100×100-pixel images, then your problem space has 10,000 features, one per pixel. However, even in image classification problems, some of the features are excessive and can be removed.
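The pixel arithmetic can be checked in a couple of lines. This sketch assumes a grayscale image stored as a NumPy array; the random values only stand in for real pixel data.

```python
import numpy as np

# A hypothetical 100x100 grayscale image (random values stand in for pixels).
rng = np.random.default_rng(0)
image = rng.random((100, 100))

# Flatten the 2D grid into a 1D feature vector: one feature per pixel.
features = image.reshape(-1)
print(features.shape)  # (10000,)
```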

Dimensionality reduction identifies and removes the features that are hurting the machine learning model’s performance or aren’t contributing to its accuracy. There are several dimensionality reduction techniques, each of which is useful for certain situations.

Feature selection

A basic and very efficient dimensionality reduction method is to identify and select a subset of the features that are most relevant to the target variable. This technique is called “feature selection.” Feature selection is especially effective when you’re dealing with tabular data in which each column represents a specific kind of information.

When doing feature selection, data scientists look for two things: features that are highly correlated with the target variable and features that contribute the most to the dataset’s variance. Libraries such as Python’s Scikit-learn have plenty of good functions to analyze, visualize, and select the right features for machine learning models.

For instance, a data scientist can use scatter plots and heatmaps to visualize the correlation between different features. If two features are highly correlated with each other, they carry largely the same information about the target variable, and including both in the machine learning model is unnecessary. In that case, you can usually remove one of them without hurting the model’s performance.
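As a rough sketch of that idea, the snippet below builds a small synthetic table in which one column is a near-duplicate of another (temperature in Celsius and in Fahrenheit), computes the pairwise correlation matrix, and drops the second member of any pair whose correlation exceeds a threshold. The column names, the data, and the 0.95 threshold are all assumptions chosen for illustration, not recommended values.

```python
import numpy as np

rng = np.random.default_rng(42)
n = 500

# Synthetic columns: temperature_f is almost a rescaled copy of temperature_c.
temperature_c = rng.normal(20, 5, n)
temperature_f = temperature_c * 9 / 5 + 32 + rng.normal(0, 0.1, n)
humidity = rng.normal(60, 10, n)

names = ["temperature_c", "temperature_f", "humidity"]
X = np.column_stack([temperature_c, temperature_f, humidity])

# Pairwise Pearson correlation between columns (a 3x3 matrix).
corr = np.corrcoef(X, rowvar=False)

# For each highly correlated pair, keep the first column and drop the second.
threshold = 0.95
to_drop = set()
for i in range(len(names)):
    for j in range(i + 1, len(names)):
        if i not in to_drop and abs(corr[i, j]) > threshold:
            to_drop.add(j)

kept = [name for k, name in enumerate(names) if k not in to_drop]
print(kept)  # ['temperature_c', 'humidity']
```

The same `corr` matrix is what a heatmap would display; the loop simply automates the decision you would otherwise make by eye.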

The same tools can help visualize the correlations between the features and the target variable. This helps remove variables that do not affect the target. For instance, you might find out that out of 25 features in your dataset, seven of them account for 95 percent of the effect on the target variable. This will enable you to shave off 18 features and make your machine learning model a lot simpler without suffering a significant penalty to your model’s accuracy.
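A sketch of this kind of selection with Scikit-learn follows. It uses a synthetic regression dataset built so that only 7 of 25 features are informative, which mirrors the hypothetical numbers above rather than any real dataset. `SelectKBest` with the `f_regression` score is one of several selection methods the library offers, and it scores each feature on its own, so treat the result as a first pass.

```python
from sklearn.datasets import make_regression
from sklearn.feature_selection import SelectKBest, f_regression

# Synthetic data: 25 features, of which only 7 actually influence the target.
X, y = make_regression(
    n_samples=500, n_features=25, n_informative=7, noise=10.0, random_state=0
)

# Score each feature against the target and keep the 7 highest-scoring ones.
selector = SelectKBest(score_func=f_regression, k=7)
X_selected = selector.fit_transform(X, y)

selected_columns = selector.get_support(indices=True)
print(X.shape, "->", X_selected.shape)
print("kept columns:", selected_columns)
```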

Projection techniques

Sometimes, you don’t have the option to remove individual features. But this doesn’t mean that you can’t simplify your machine learning model. Projection techniques, also known as “feature extraction,” simplify a model by compressing several features into a lower-dimensional space.

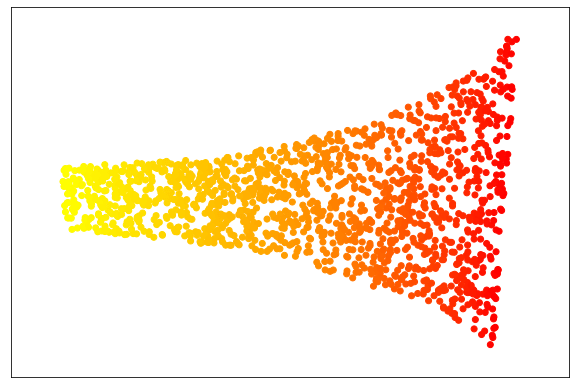

A common example used to represent projection techniques is the “swiss roll” (pictured), a set of data points that swirl around a focal point in three dimensions. This dataset has three features. The value of each point (the target variable) is measured based on how close it is along the convoluted path to the center of the swiss roll. In the picture, red points are closer to the center and the yellow points are farther along the roll.

In its current state, creating a machine learning model that maps the features of the swiss roll points to their value is a difficult task and would require a complex model with many parameters. But with the help of dimensionality reduction techniques, the points can be projected to a lower-dimension space that can be learned with a simple machine learning model.

There are various projection techniques. For the swiss roll example, we used “locally-linear embedding” (LLE), an algorithm that reduces the dimension of the problem space while preserving the key elements that separate the values of data points. When our data is processed with LLE, the result looks like an unrolled version of the swiss roll, in which points of each color remain together. In fact, this problem can still be simplified into a single feature and modeled with linear regression, one of the simplest machine learning algorithms.
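If you want to try this yourself, Scikit-learn ships both a swiss roll generator and an LLE implementation. The sketch below is not the exact setup behind the example above; the sample count, noise level, and `n_neighbors` value are assumptions picked for illustration, and the quality of the unrolling depends on them.

```python
from sklearn.datasets import make_swiss_roll
from sklearn.manifold import LocallyLinearEmbedding

# X holds the three features; t is each point's position along the roll,
# which plays the role of the target variable.
X, t = make_swiss_roll(n_samples=1000, noise=0.1, random_state=42)

# Project the 3D points onto a 2D space with locally-linear embedding.
lle = LocallyLinearEmbedding(n_components=2, n_neighbors=10, random_state=42)
X_unrolled = lle.fit_transform(X)

print(X.shape, "->", X_unrolled.shape)  # (1000, 3) -> (1000, 2)
```

Plotting `X_unrolled` colored by `t` is a quick way to check whether neighboring points on the roll stayed together after the projection.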

While this example is hypothetical, you’ll often face problems that can be simplified if you project the features to a lower-dimensional space. For instance, “principal component analysis” (PCA), a popular dimensionality reduction algorithm, has found many useful applications to simplify machine learning problems.

In the excellent book Hands-On Machine Learning with Scikit-Learn, Keras, and TensorFlow, data scientist Aurélien Géron shows how you can use PCA to reduce the MNIST dataset from 784 features (28×28 pixels) to about 150 features while preserving 95 percent of the variance. This level of dimensionality reduction has a huge impact on the costs of training and running artificial neural networks.
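The same idea can be sketched in a few lines of Scikit-learn. Because MNIST has to be downloaded, this example substitutes the library’s small bundled digits dataset (8×8 images, 64 features), so the numbers it prints will differ from the MNIST figures above. Passing a float between 0 and 1 as `n_components` asks PCA to keep just enough components to retain that fraction of the variance.

```python
from sklearn.datasets import load_digits
from sklearn.decomposition import PCA

# Stand-in for MNIST: 1,797 images of 8x8 pixels, i.e. 64 features each.
X, y = load_digits(return_X_y=True)

# Keep the smallest number of components that preserves 95% of the variance.
pca = PCA(n_components=0.95)
X_reduced = pca.fit_transform(X)

retained = pca.explained_variance_ratio_.sum()
print(f"{X.shape[1]} features -> {X_reduced.shape[1]} components")
print(f"variance retained: {retained:.3f}")
```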

There are a few caveats to consider about projection techniques. Once you fit a projection, you must transform new data points to the lower-dimensional space before running them through your machine learning model. However, the cost of this preprocessing step is usually small compared to the gains of having a lighter model. A second consideration is that transformed data points are not directly representative of their original features, and transforming them back to the original space can be tricky and in some cases impossible. This might make it difficult to interpret the inferences made by your model.
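Both caveats show up in a short sketch. It again uses Scikit-learn’s bundled digits dataset as an assumed stand-in for real data: the projection is fitted on the training split only, reused to transform new points, and then inverted to show that the round trip loses information.

```python
import numpy as np
from sklearn.datasets import load_digits
from sklearn.decomposition import PCA
from sklearn.model_selection import train_test_split

X, _ = load_digits(return_X_y=True)
X_train, X_new = train_test_split(X, test_size=0.2, random_state=0)

# Fit the projection once, on the training data only.
pca = PCA(n_components=0.95).fit(X_train)

# Caveat 1: new points must go through the same fitted projection.
X_new_reduced = pca.transform(X_new)

# Caveat 2: mapping back gives an approximation, not the original pixels.
X_new_restored = pca.inverse_transform(X_new_reduced)
mse = np.mean((X_new - X_new_restored) ** 2)

print(X_new.shape, "->", X_new_reduced.shape, "->", X_new_restored.shape)
print(f"mean squared reconstruction error: {mse:.3f}")
```

PCA at least offers an approximate inverse. Some other projection methods provide no way back to the original features at all.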

Dimensionality reduction in the machine learning toolbox

https://youtu.be/AU_hBML2H1c

Having too many features will make your model inefficient. But removing too many features will not help either. Dimensionality reduction is one among many tools data scientists can use to make better machine learning models. And as with every tool, it must be used with caution and care.

Ben Dickson is a software engineer and the founder of TechTalks, a blog that explores the ways technology is solving and creating problems.